Cloud vs Mac Studio: the hybrid split, and why both

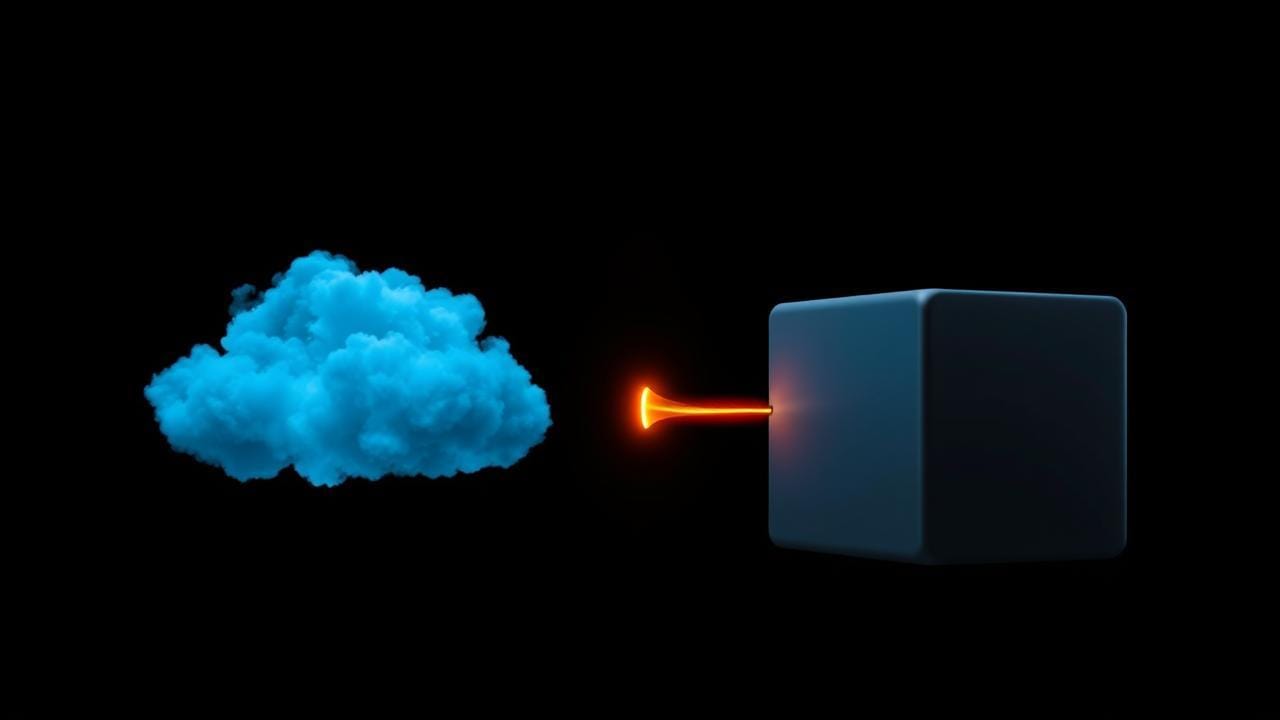

Customer-facing belongs in the cloud. Training, batch, and eval belong on the desk. Here's why running both costs less and works better.

Imagine you're running a small bakery. You have a storefront on a busy street. That's where customers come in. That's where you have to be open at predictable hours. That's where every cost is variable and depends on how many people walk through the door. You also have a kitchen. The kitchen doesn't need to be on the busy street. The kitchen needs to be wherever the rent is cheap and the ovens fit. You can spend big money on the storefront because that's where the customer experience happens, and save big money on the kitchen because nobody sees it.

This is, almost exactly, how I think about AI infrastructure.

The customer-facing part of an AI product belongs in the cloud. It has to be fast, it has to be available, it has to scale up when a customer asks something and back down when they don't, and it has to live behind a public URL with proper auth and compliance. AWS is good at that. So are GCP, Azure, and the other big platforms. They're expensive per unit of compute, but you only pay when the customer is actually there.

The kitchen work (training new models on captured judgment, running nightly batch jobs, evaluating which prompt version is better, transcribing voice notes, generating internal marketing assets) doesn't belong in the cloud. It belongs on a machine on your desk. A Mac Studio with enough memory and a fast chip is, right now, the best per-dollar AI workhorse you can buy for the kitchen work. You pay the capital cost up front, and after that the marginal cost of running a job is the electricity to keep the machine on.

The thing I want to convince you of in this piece is that running both is cheaper, better, and easier than running either alone, and that the split between them is not an aesthetic choice. It falls out of the work itself.

This is piece 3 in the series. The previous two were about the MVP question and capturing the secret sauce. This one zooms out and shows where each piece of the stack lives. The next two will go deep on each side.

What goes in the cloud and why

The cloud side is for things where you can't predict when the work will happen, the work has to happen fast when it does, and the customer notices if it doesn't.

Specifically:

- The customer API. When a customer hits your endpoint, the response has to come back in seconds. You can't have it wait while a machine on a desk somewhere boots up. A warm cloud function is ready in milliseconds.

- Auth and identity. The customer's identity has to be verifiable from anywhere on the internet. You can't run that on a residential connection that might go down because a tree fell on a power line.

- The live data store. Customer data has to be backed up, replicated, audited, encrypted, compliant with the things your customers' compliance teams care about. Managed databases do this for you. Self-hosting it on a Mac Studio is technically possible and operationally a nightmare.

- The customer-facing inference calls. When the customer asks the AI a question, the model that answers has to be available now. The cloud providers offer hosted models (Bedrock on AWS, with Claude variants and Llama variants and a few others) that you pay for per token. Per-token pricing is high, but the model is hot, the latency is low, and you don't have to operate anything. (This is called managed inference, if you want to look it up later.)

- The storage that has to be highly available. S3 (or its equivalents) is so cheap and so durable that there's no reasonable argument for not using it as your artifact store. Model weights, training data, eval sets, customer-uploaded files, that all lives in S3.

The shorthand version: anything the customer experiences directly, anything that needs to be available 24/7 at low latency, and anything that needs to scale elastically with customer demand. That's cloud.

What goes on the Mac Studio and why

The local side is for work that's predictable enough that you can run it on a fixed-size machine, batched enough that it doesn't need to respond in seconds, and yours enough that you'd rather not pay rent on it.

Specifically:

- Fine-tuning models on the captured judgment. This is the work that turns the consultant's annotated examples and decision rules into a small custom model. It's GPU-intensive but it doesn't happen continuously. It happens once a week, maybe, when there's enough new captured material to bother. A Mac Studio with unified memory can do this for the size of models that actually fit a single-consultant secret sauce, and the per-job cost is the electricity it draws while running.

- Running eval sets. When you change a prompt or swap a model, you want to run it against a fixed set of golden examples and see what changed. That's a batch job. It's not customer-facing. It can wait a few minutes. The cloud equivalent would charge you per token for every eval run, which adds up fast when you're iterating.

- Internal batch jobs. Summarizing the week's customer interactions. Transcribing voice notes from the consultant into training data. Generating illustrations for the marketing site. (This last one is where local image generation, called diffusion if you want to look it up later, is a quiet superpower. Generating a few hundred site assets in an afternoon costs nothing beyond electricity on a Mac Studio and costs real money on a cloud provider.)

- Internal tooling. The dashboards the consultant uses, the queue they review submissions in, the audit explorer. These don't have to be public. They can live on a local server behind a VPN or a tunnel. Cloud-hosting them is a waste of money.

The shorthand version: anything that's batch, anything that's predictable, anything that's internal, and anything that involves running a model a lot, like during iteration, where the per-token cost would otherwise eat your margin alive.
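The two shorthand rules can be written down as a tiny decision function. This is only a sketch: the `Workload` fields and the `placement` function are names I'm making up here to mirror the criteria above, not part of any real tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    """Hypothetical description of one piece of work in the stack."""
    name: str
    customer_facing: bool   # does a customer experience it directly?
    always_on: bool         # must it be available 24/7 at low latency?
    elastic_demand: bool    # does load swing with customer traffic?


def placement(w: Workload) -> str:
    """Return "cloud" if any cloud criterion holds, otherwise "local"."""
    if w.customer_facing or w.always_on or w.elastic_demand:
        return "cloud"
    return "local"


# Example workloads taken from the two lists above.
WORKLOADS = [
    Workload("customer API", True, True, True),
    Workload("artifact store", False, True, False),
    Workload("weekly fine-tune", False, False, False),
    Workload("eval run", False, False, False),
    Workload("internal dashboard", False, False, False),
]

for w in WORKLOADS:
    print(f"{w.name}: {placement(w)}")
```

The point of the sketch is that the rule is an "any of" test for the cloud side. Work only lands on the desk when none of the three cloud criteria apply.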

Why both, specifically

Here's where I get pushy. People keep asking me whether they can run all of it in the cloud or all of it locally, and the answer in both directions is "you can, and you'll regret it."

All cloud means you pay AWS for every eval run, every fine-tune, every batch summary, every internal tooling page-view. The cost graph for that goes up and to the right linearly with your iteration speed. The faster you want to improve the product, the more it costs you to improve the product. That's a perverse incentive. The team starts slowing down to save money, which is exactly the wrong direction for a young product.
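To make the shape of that cost graph concrete, here is a rough sketch of metered eval cost as a function of how often you iterate. Every input below (examples per run, tokens per example, price per million tokens) is a placeholder I picked for illustration, not a real price or a measurement.

```python
def monthly_eval_cost(
    runs_per_month: int,
    examples_per_run: int,
    tokens_per_example: int,
    price_per_million_tokens: float,
) -> float:
    """Metered cost of eval runs. It is linear in the number of runs."""
    tokens = runs_per_month * examples_per_run * tokens_per_example
    return tokens / 1_000_000 * price_per_million_tokens


# Placeholder inputs: 200 golden examples, 2,000 tokens each,
# and a made-up price of $10 per million tokens.
for runs in (10, 100, 1000):
    cost = monthly_eval_cost(runs, 200, 2_000, 10.0)
    print(f"{runs:>5} runs/month -> ${cost:,.2f}")
```

Whatever the real inputs are, ten times the iteration means ten times the bill. On owned hardware the same loop only costs machine time.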

All local means your customer-facing endpoint depends on the Mac Studio on your desk being up, the residential ISP being up, the power being on, the machine not being in the middle of a fine-tune when a customer query arrives. You will not pass any meaningful compliance review. You cannot scale beyond your one machine without buying more machines and operating a cluster, at which point you have re-invented cloud computing badly. And the moment you go on vacation, you have to leave the machine on and the apartment cool, and hope nothing goes wrong.

Both means each side does what it's good at. The cloud side never has to do batch work, so its cost stays roughly proportional to customer load. The local side never has to do customer-facing work, so its uptime story is whatever-you-can-manage, and the work it does (training, eval, batch) tolerates a few hours of downtime fine. The model that the cloud side serves gets steadily better because the local side keeps training improved versions and pushing them up to S3. The customer never sees the machinery.

There's a third reason the split is good: iteration speed. When the local side is running on hardware you own, you can try things. Want to re-run a different eval set? Just run it. Want to fine-tune three different sizes of model in parallel to compare them? Just run them. Nobody is metering you. That freedom turns into a much faster product, because the cost of a bad experiment goes from "real money" to "a couple of hours of machine time."

The cost-model deep dive is its own piece: "What you pay before customers arrive" runs the actual numbers on this split. If you're skeptical of the economics, jump there.

How they actually talk to each other

I'll cover this in detail later in the series, but the short version is that the sync pattern between the two sides is simpler than people fear. There's a managed queue on the cloud side (Amazon SQS, the Simple Queue Service). The local machine pulls work off it on a schedule and pushes results back via S3 uploads or a small API. Trained models go up to S3 as artifacts, and the next time a cloud function spins up, it picks up the new version.

That's the whole hybrid sync pattern. No complicated VPN. No persistent connection. Each side acts on its own schedule, and they share a queue and a bucket. It is genuinely hard to mess up.
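Here is a minimal sketch of one scheduled pass on the local machine. To keep it runnable without an AWS account, the queue and bucket are in-memory stand-ins that I made up (`InMemoryQueue`, `InMemoryBucket`). In a real setup they would presumably wrap boto3's SQS `receive_message` / `delete_message` and S3 `put_object` calls. The job shape and the `results/` key layout are also assumptions.

```python
import json
from collections import deque
from typing import Callable, Optional


class InMemoryQueue:
    """Stand-in for the managed queue (SQS in the real setup)."""

    def __init__(self) -> None:
        self._messages: deque = deque()

    def send(self, body: str) -> None:
        self._messages.append(body)

    def receive(self) -> Optional[str]:
        # A real SQS consumer would also delete the message after success.
        return self._messages.popleft() if self._messages else None


class InMemoryBucket:
    """Stand-in for the artifact bucket (S3 in the real setup)."""

    def __init__(self) -> None:
        self.objects: dict = {}

    def put(self, key: str, data: bytes) -> None:
        self.objects[key] = data


def drain_once(
    queue: InMemoryQueue,
    bucket: InMemoryBucket,
    handler: Callable[[dict], dict],
) -> int:
    """Pull every waiting job, run it locally, push each result to the bucket."""
    done = 0
    while (body := queue.receive()) is not None:
        job = json.loads(body)
        result = handler(job)
        bucket.put(f"results/{job['id']}.json", json.dumps(result).encode())
        done += 1
    return done


# The cloud side enqueues a job; the local side drains the queue on its schedule.
queue, bucket = InMemoryQueue(), InMemoryBucket()
queue.send(json.dumps({"id": "eval-001", "kind": "eval"}))
processed = drain_once(queue, bucket, lambda job: {"job": job["id"], "status": "ok"})
print(processed, sorted(bucket.objects))
```

Neither side calls the other directly. The cloud side only writes to the queue and reads from the bucket, and the local side does the reverse, which is why no persistent connection is needed.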

For the wiring detail (which queues, which events, which artifacts), I write that up in the hybrid sync pattern piece. Read it when you're ready to build the connecting tissue.

How I actually run it

Quick reality check, since this isn't theoretical for me. I run this exact split on my own homelab. There are three nodes: engine-01 does the fine-tunes and image generation, core-01 runs the batch jobs and eval harness, and store-01 holds the artifact mirror. They're not load-balanced; they're roles. Engine-01 is the one with the most GPU memory. Core-01 is the one I trust to run unattended for a week. Store-01 is the one with the most disk. The cloud side of the products I work on lives in AWS, and the sync pattern between them is exactly what I described above: SQS for work, S3 for artifacts.
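"Roles, not load balancing" can be sketched as a plain lookup table. The node names come from my setup as described above; the job-kind strings and the `node_for` helper are invented for this example.

```python
# Each node owns a fixed set of job kinds. Nothing is balanced across nodes.
ROLES = {
    "engine-01": {"fine_tune", "image_generation"},
    "core-01": {"batch", "eval"},
    "store-01": {"artifact_mirror"},
}


def node_for(job_kind: str) -> str:
    """Return the single node whose role covers this kind of job."""
    for node, kinds in ROLES.items():
        if job_kind in kinds:
            return node
    raise KeyError(f"no node has the role {job_kind!r}")


print(node_for("fine_tune"))
print(node_for("eval"))
```

With one Mac Studio the table collapses to a single entry that owns every job kind.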

The three-node setup is overkill for an early-stage product. One Mac Studio does fine. I have three because I have a few different things in flight. If you're building one MVP, you need one machine. The cost is a few thousand dollars up front and then basically nothing beyond electricity, and that machine will pay for itself against cloud bills inside three months of normal iteration. (For the actual cost math, the Mac Studio side of the stack is the piece that breaks it down.)
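The payback claim is simple division, sketched below. The dollar figures in the example call are placeholders I chose to show the arithmetic, not my actual bills; plug in your own hardware price and the cloud spend you expect to avoid.

```python
import math


def breakeven_months(
    hardware_cost: float,
    monthly_cloud_bill_avoided: float,
    monthly_electricity: float = 0.0,
) -> float:
    """Months until owned hardware has saved as much as it cost."""
    monthly_saving = monthly_cloud_bill_avoided - monthly_electricity
    if monthly_saving <= 0:
        return math.inf  # the machine never pays for itself
    return hardware_cost / monthly_saving


# Placeholder inputs: $4,000 machine, $1,500/month of avoided cloud spend,
# $20/month of electricity.
months = breakeven_months(4_000, 1_500, 20)
print(f"break-even after about {months:.1f} months")
```

The sketch ignores resale value and your own time spent operating the machine, both of which would shift the answer.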

What I want you to take from this

The split between cloud and local is not a tooling preference. It's a recognition that two completely different kinds of work (customer-facing real-time stuff and iteration-heavy batch stuff) have completely different cost profiles, completely different uptime requirements, and completely different scaling shapes. Putting them on the same infrastructure means one side compromises for the other.

Cloud for the storefront. Mac Studio for the kitchen. Each side does what it's good at, and they talk through a queue and a bucket.

The next piece is the AWS-native shape, the actual box-and-line diagram for the cloud side, with the specific managed services I'd start with on day one. After that, the Mac Studio side, with what I'd install and what it'd cost.