Auth and multi-tenancy from day one

The cheapest day-one decision is also the most expensive one to defer: auth, tenant scoping, and row-level security wired in before you have a single customer.

For small teams and small organizations — frugal stacks, team-of-one governance, small-shop AI

mflux, ollama/mlx-lm, fine-tuning, whisper, a batch runner: what runs on the desk, and the math on why it pays for itself in months.

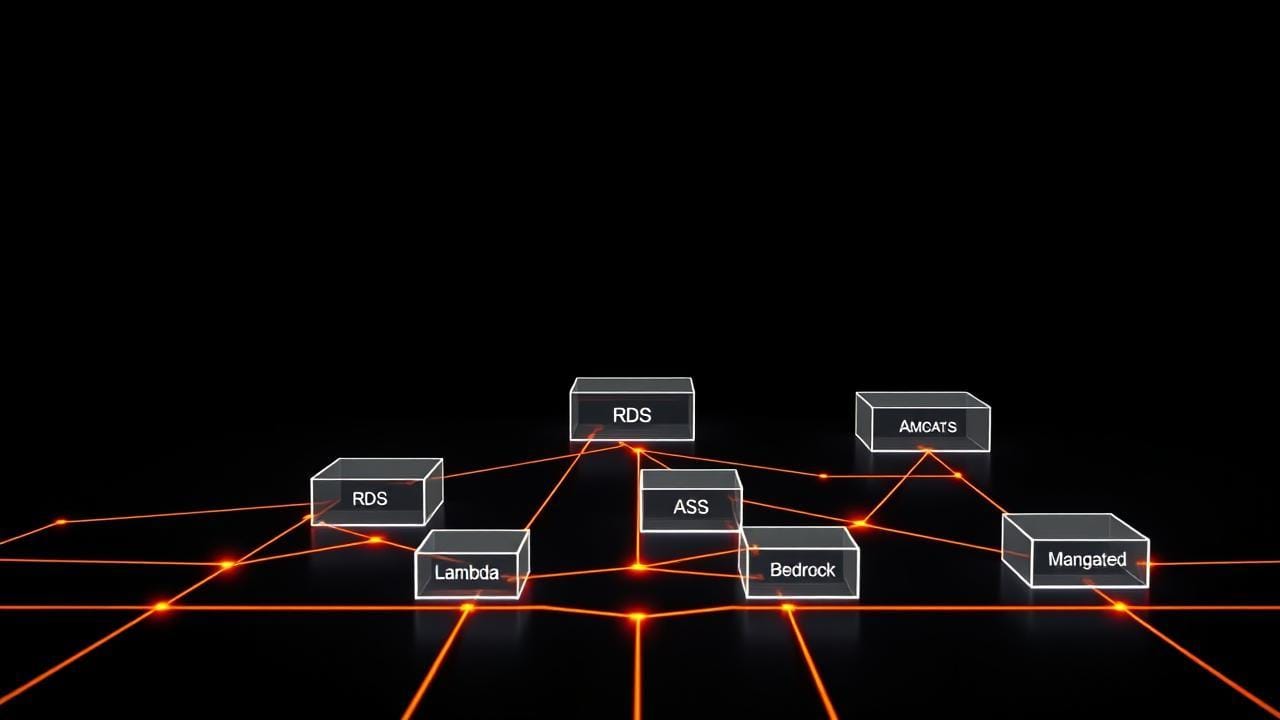

API Gateway, Lambda, Cognito, RDS+pgvector, Bedrock, S3, EventBridge, SQS, CloudFront, CloudWatch. The smallest cloud shape that actually ships.

Customer-facing belongs in the cloud. Training, batch, and eval belong on the desk. Here's why running both costs less and works better.

Captured judgment is not a model and it's not a knowledge base. It's the small set of things the consultant has personally learned from being wrong.

Most AI MVPs over-build the model and under-build the product loop. Here's the question that fixes that, and the eighteen pieces that follow it.

What I'd actually give a 5-to-50-person org getting serious about AI in mid-2026: a hosted+local hybrid stack, the governance scaffolding to make it safe, and the cost numbers I'd budget against. Concrete picks, not a vendor matrix.

Sub-100-person organizations have different AI procurement constraints than enterprises: tighter budgets, less vendor leverage, less in-house governance, and often more sensitive data. Here's the procurement frame that actually fits the shape of those orgs.

Enterprise AI governance frameworks don't scale down to a solo operator, and yet governance is one of the four positions I keep coming back to. Here's the lightweight-but-real version I actually run, and why each piece earns its keep when nobody else is going to ask.

Apple Silicon is the most defensible inference platform a small shop can buy in 2026. Not because it beats H100s on absolute throughput (it doesn't), but because the unified-memory architecture, MLX maturity, and capex-vs-opex math all line up for the workloads small shops actually run.

A pragmatic playbook for small organizations adopting AI in 2026: where to start, what not to do, how to build governance early without grinding the work to a halt, and how to think about tools and data before vendor lock-in does it for you.

NotebookLM was the consumer surface that made the team-second-brain pattern legible. The pattern itself is older and the builds that work in production are usefully different from the consumer demo. Worth pulling the thread.