Memory isolation is the whole point

Long-term memory has to be persona-scoped from day one. Otherwise the AI brings up the wrong thing at the worst possible moment, and you stop trusting it.

If I had to pick the single feature of an AI system that determines whether people end up trusting it or quietly turning it off, it wouldn't be the model. It wouldn't be the tool layer. It wouldn't even be the latency, though I have opinions about that too. It would be memory. Specifically, what the AI remembers about you, and when it brings that memory back up.

Memory is the part people fall in love with and the part that betrays them. The first few weeks with a system that remembers feel like meeting a colleague who genuinely listens. "Oh right, you mentioned that last month, how did it land?" That landing strip of recognition is real, and it's why long-term memory is the single biggest UX leap from "chatbot" to "assistant." But the same feature that makes the system feel alive makes it terrifyingly easy to misfire. The AI mentions a personal-life concern during a quarterly business review prep session. The AI brings up the work crisis during a quiet weekend conversation about a planned trip. The AI conflates two distinct names from the household side because both ended up in the same memory pool. Each of these moments isn't a bug in the model. It's a bug in where memory lives.

Which is why I want to make the strongest claim in this whole series here, in this one piece: long-term memory MUST be persona-scoped, or the whole framework collapses. There is no version of "persona-aware AI" that does tools and prompts and identity correctly but lets memory float in one big bucket. That single shortcut undoes every other discipline. Memory is the whole point.

What goes wrong when memory is shared

Let me back up a half-step and describe the failure mode plainly, because I don't think enough people have lived with it long enough yet to feel how bad it is.

The setup: you've been using your AI assistant for six months. You've told it about your work in detail: projects, people, frustrations, wins. You've told it about your personal life and household in detail: the schedules, the recurring concerns, the soft spots of the year. You've talked to it about a side project, about hobbies, about things you wouldn't necessarily say out loud at a dinner party. All of this lands in long-term memory, which the AI is dutifully extracting and storing, because it's been told that's helpful.

Now, you sit down on a Monday morning and start a work session. You ask it for help drafting an email to a peer on another team. And mid-draft, the AI helpfully adds context: "Given that you've been concerned about a household issue lately, you might want to wrap this up before your afternoon block. Should I keep it short?"

That is a real thing that can happen, and it is a complete trust break. The information was correct. The retrieval was technically working. The model isn't broken. The room is wrong. The work persona has no business reaching into the personal persona's memory. The fact that it can means the whole envelope of "personal" isn't real. It's a label on a folder in one big shared bucket, and the bucket leaks.

This is what people mean when they say "the AI got confused about which kid I was talking about." It's not a confusion bug. It's a scope bug. The persona-scoping wasn't there at the storage layer, so the retrieval pulled from everything, so the answer mixed things that should never have been adjacent.

Why the fix has to be structural

The reflex, when this kind of leak happens, is to patch it at the model. Add a system prompt: "do not mention personal-context items when in work mode." Add a filter: scan the model's output for personal-sounding phrases and rewrite them. These approaches will work three times out of four and fail in the way you fear the fourth time. They don't address the actual problem.

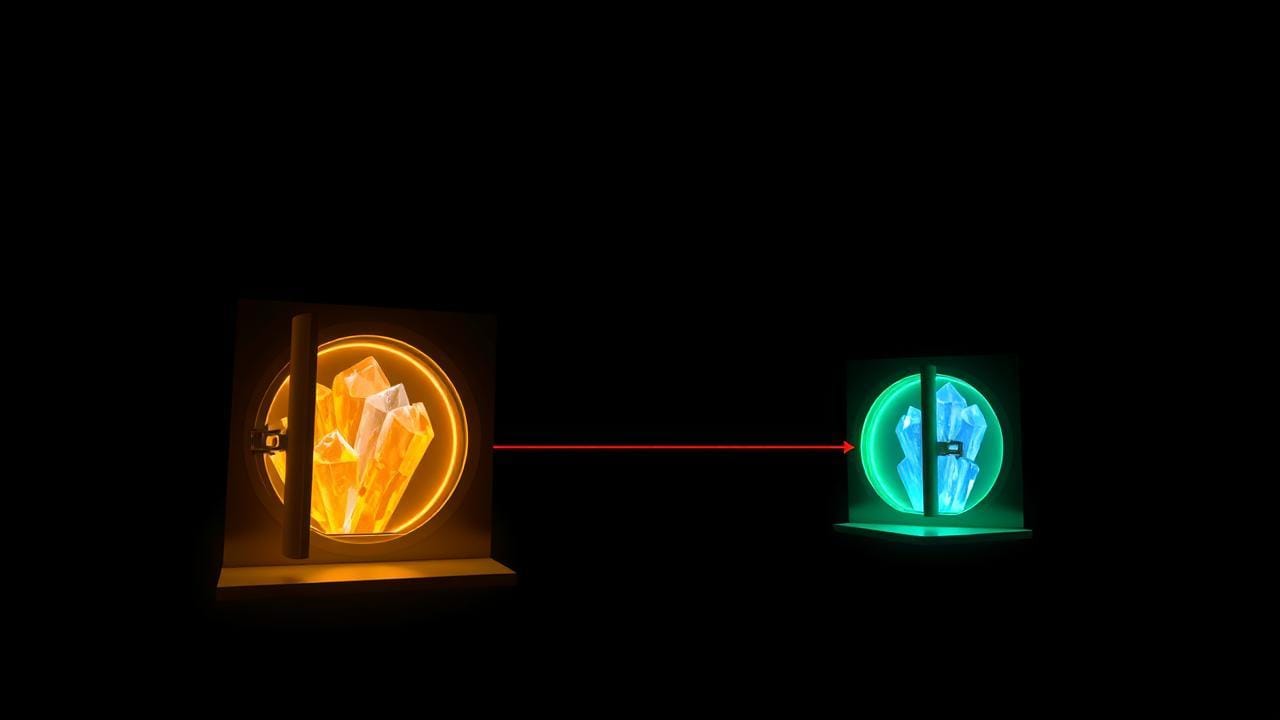

The actual problem is that the memory store is not the right shape. A persona is supposed to be a container for what the AI sees, knows, can act through, and represents you as. Memory sits at the very center of that. If the Family persona has its own memory and the Work persona has its own memory, and there's no path from one to the other except by an explicit, audited operation, then the failure mode I just described becomes structurally impossible. The Work persona doing a memory retrieval is searching the Work persona's memory. There is no "everything I know about you" bucket to dredge.
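To make "structurally impossible" concrete, here is a minimal Python sketch, assuming an in-process store. The names `MemoryStore` and `PersonaMemory` are hypothetical, and a substring match stands in for whatever embedding search a real system would use. The point is the shape: a session is handed exactly one persona's store, and that store has no reference to any other.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryStore:
    """One persona's long-term memory. Nothing in here references other stores."""
    persona: str
    items: list[str] = field(default_factory=list)

    def remember(self, text: str) -> None:
        self.items.append(text)

    def retrieve(self, query: str) -> list[str]:
        # Naive substring match stands in for embedding search (assumption).
        q = query.lower()
        return [item for item in self.items if q in item.lower()]


class PersonaMemory:
    """Holds one separate store per persona; there is no global bucket to query."""

    def __init__(self, personas: list[str]) -> None:
        self._stores = {p: MemoryStore(p) for p in personas}

    def store_for(self, persona: str) -> MemoryStore:
        # A session is given only the store for its own persona.
        return self._stores[persona]


memory = PersonaMemory(["work", "family"])
memory.store_for("family").remember("Dentist appointment for the household on Friday")
memory.store_for("work").remember("Quarterly review prep with the platform team")

work = memory.store_for("work")
print(work.retrieve("appointment"))  # prints []; the family item lives in a different store
```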

This sounds heavy-handed until you realize it's exactly how a thoughtful colleague behaves. The friend who's been to your house and knows the household side of your life doesn't pull from that context in a board meeting. Not because they're suppressing it; they just genuinely aren't operating from that part of their relationship with you in that moment. Different room. Different memory in mind. The structural version of that, for an AI, is per-persona memory stores and no automatic cross-talk.

I went deeper on this idea in "A persona is a container, not a costume." Memory is the heaviest thing in that container; if it isn't scoped, the container is just a label.

The three audiences, one rule

The shape doesn't change between personal use, small business, and enterprise. What changes is what gets leaked when it goes wrong, and how loud the failure is.

For a personal user, this is the dignity issue. The Family persona knows the household's standing facts, schedules, contacts, the recurring stuff that keeps the home running. The Personal persona, the one I use for everything from buying running shoes to drafting emails to old friends, knows different things. The Hobby persona, the one used for a long-running side project, knows still other things. Each of these memories should stay where it lives. The Family persona is not allowed to reach into the Hobby persona's memory to make small talk while it's helping with a household task. Not because either is secret; the shape of the conversation has to match the shape of the room you're in. Mixing them just makes everything feel slightly off.

If you run a small business, this gets sharper fast. The business persona knows your clients, what they're working on, what they're sensitive about, what you've heard about their internal politics. The personal persona knows your friend group, your finances, your unfiltered opinions about a few of your clients on the worst days. Sharing memory across these isn't a leak risk in the dramatic sense; there's no breach. It's a judgment risk. The AI, helpful as ever, mentions a client's name in a personal context. It uses your unfiltered framing of a project when you're drafting the actual client email. Both of these are career-ending small mistakes. Persona-scoped memory is the only structural way to make them impossible.

In an enterprise, this is the difference between memory being a feature and memory being a regulatory liability. The Sales persona knows customer information governed by your privacy regime. The HR persona knows employee information governed by an entirely different one. The Engineering persona knows code, designs, internal decisions. If your AI's long-term memory is one big store with all of this in it, the answer to "what's the data classification of this memory store?" is "the highest classification of any item in it", and that means a developer's general-purpose chat is suddenly operating under the same controls as your most sensitive system. That's not just unworkable. It's the kind of thing that gets the program shut down. Persona-scoped memory keeps each store in its own scope, with its own controls, audited as its own thing.
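The classification arithmetic is simple enough to sketch. This assumes a hypothetical four-level scale (`PUBLIC` through `RESTRICTED`) and the rule stated above: a store is governed by the strictest item it contains.

```python
from enum import IntEnum


class Classification(IntEnum):
    # Hypothetical scale, ordered so a larger value means stricter controls.
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


def store_classification(items: dict[str, Classification]) -> Classification:
    """A store inherits the highest classification of anything it holds."""
    return max(items.values(), default=Classification.PUBLIC)


engineering = {"design doc notes": Classification.INTERNAL}
sales = {"customer contact history": Classification.CONFIDENTIAL}
hr = {"employee performance notes": Classification.RESTRICTED}

# One shared bucket: everything is governed by the strictest item in it.
shared = {**engineering, **sales, **hr}
print(store_classification(shared).name)  # RESTRICTED

# Persona-scoped stores: each keeps its own level, and therefore its own controls.
for name, store in [("engineering", engineering), ("sales", sales), ("hr", hr)]:
    print(name, store_classification(store).name)
```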

Cross-persona access exists, but it has to be loud

I want to be careful not to say "cross-persona memory access never happens." Of course it sometimes has to. The Family persona has to know that the user is on call this weekend, because that affects the family schedule. The Work persona has to know that the user has a doctor's appointment Wednesday afternoon, because that affects the meeting cadence. These crossings aren't pathological. They're real and necessary.

What makes the difference is that they have to be deliberate, audited operations, never a default. When the Family persona pulls a piece of context from the Work persona's memory, that crossing is an event. It's logged. It's narrow: a specific item, not "all your work memory." It's done with the user's knowledge, in a way they could replay later if they wanted to.

The hard rule I'd argue for is this: by default, a retrieval inside a persona only touches that persona's memory store. Any time a query reaches outside, that's an explicit, policy-governed operation, with a record. No back doors. No "we'll just join the indexes for performance reasons." No "we centralized the embeddings to save cost." The moment you take that shortcut, you've collapsed the framework, because if cross-persona retrieval can happen silently, the framework offers no protection from the failure modes I described at the top of this piece.
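Here is one way the policy shape could look, as a hedged sketch rather than a recommended mechanism. `IsolatedMemory`, `cross_read`, and the `allowed` set of (requester, source) pairs are all hypothetical; a real system would decide approval in its own way. What the sketch does show is the asymmetry: `search` can only see the active persona's store, while a crossing names a single item, states a reason, passes a policy check, and leaves an audit record.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class CrossingEvent:
    """One audited cross-persona read: who asked, whose store, which item, why."""
    requester: str
    source: str
    item_id: str
    reason: str
    at: datetime


class IsolatedMemory:
    def __init__(self, allowed: set[tuple[str, str]]) -> None:
        # Hypothetical policy: (requester, source) pairs that may cross at all.
        self._allowed = allowed
        self._stores: dict[str, dict[str, str]] = {}
        self.audit_log: list[CrossingEvent] = []

    def add(self, persona: str, item_id: str, text: str) -> None:
        self._stores.setdefault(persona, {})[item_id] = text

    def search(self, persona: str, query: str) -> list[str]:
        # Default path: only the active persona's store is consulted.
        store = self._stores.get(persona, {})
        return [t for t in store.values() if query.lower() in t.lower()]

    def cross_read(self, requester: str, source: str, item_id: str, reason: str) -> str:
        # Explicit path: one named item, policy-checked, logged before it is returned.
        if requester == source:
            raise ValueError("not a crossing; use search() instead")
        if (requester, source) not in self._allowed:
            raise PermissionError(f"{requester} may not read from {source}")
        text = self._stores[source][item_id]  # KeyError if the item does not exist
        self.audit_log.append(
            CrossingEvent(requester, source, item_id, reason, datetime.now(timezone.utc))
        )
        return text


mem = IsolatedMemory(allowed={("family", "work")})
mem.add("work", "oncall", "On call this weekend")
mem.add("family", "trip", "Planning a weekend trip to the coast")
print(mem.search("family", "weekend"))  # ['Planning a weekend trip to the coast']
print(mem.cross_read("family", "work", "oncall", "weekend schedule"))  # On call this weekend
print(len(mem.audit_log))  # 1
```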

I'm deliberately not getting into the specific approach for how the system tracks and approves these crossings; that's a place where I'd rather underclaim. But the policy shape is what matters: cross-persona access exists, is rare, is loud, and is auditable as its own kind of event.

The parts that will bite you

A few places this gets hard in practice.

Memory extraction is the entry point. Memory doesn't appear in the store by magic; it's extracted from conversation. If extraction itself isn't persona-scoped, you've already lost. When the user is in the Family persona, the things being remembered should land in the Family persona's store, full stop. If your extractor writes everything to a global "user memories" table with a persona tag column, and retrieval honors that tag only through a query filter, you have created an extremely large surface area for one bug to make every memory cross-accessible at once. Make the stores genuinely separate at the boundary. The discipline is worth the duplication.
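A minimal sketch of "genuinely separate at the boundary," assuming each persona gets its own SQLite database rather than a row in a shared table. `Session`, `extract_memories`, and the "remember" heuristic are hypothetical placeholders for a real extractor. Because the session is constructed with exactly one connection, the extractor has nowhere else to write, and no forgotten `WHERE persona = ?` clause can widen its reach.

```python
import sqlite3


def open_persona_store(path: str) -> sqlite3.Connection:
    """Each persona gets its own database; there is no shared table to filter."""
    conn = sqlite3.connect(path)
    conn.execute("CREATE TABLE IF NOT EXISTS memories (text TEXT NOT NULL)")
    return conn


def extract_memories(utterance: str) -> list[str]:
    # Placeholder extractor (hypothetical): keeps sentences starting with "remember".
    # A real system would call a model here.
    return [s.strip() for s in utterance.split(".") if s.strip().lower().startswith("remember")]


class Session:
    """A conversation bound to exactly one persona's store for its lifetime."""

    def __init__(self, persona: str, store: sqlite3.Connection) -> None:
        self.persona = persona
        self._store = store

    def on_user_message(self, text: str) -> None:
        # Extraction can only write to the store this session was handed.
        for memory in extract_memories(text):
            self._store.execute("INSERT INTO memories (text) VALUES (?)", (memory,))
        self._store.commit()

    def recall(self) -> list[str]:
        return [row[0] for row in self._store.execute("SELECT text FROM memories")]


# Each ":memory:" connection is its own private database, standing in for
# separate files, schemas, or services per persona.
stores = {p: open_persona_store(":memory:") for p in ("work", "family")}
family = Session("family", stores["family"])
family.on_user_message("Remember the school pickup moved to 3pm. Thanks!")
work = Session("work", stores["work"])
print(work.recall())    # []
print(family.recall())  # ['Remember the school pickup moved to 3pm']
```

The schema is duplicated per persona on purpose; that duplication is the cost the discipline asks you to pay.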

Importing memory at persona creation. People will, reasonably, want a new persona to start with some context. Don't make that an automatic "copy everything." Make it a deliberate import: choose what crosses, narrate why, log it. Less convenient. Much safer.
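A deliberate import can be sketched as a function that accepts only hand-picked items, each with a reason, and logs every one. The function name `create_persona_with_imports` and the `ImportRecord` shape are hypothetical; the property worth copying is that there is no "copy everything" argument at all.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ImportRecord:
    """Audit entry for one item carried into a new persona."""
    source: str
    target: str
    item_id: str
    reason: str


def create_persona_with_imports(
    stores: dict[str, dict[str, str]],
    source: str,
    target: str,
    selections: dict[str, str],  # item_id -> why this item should cross
    log: list[ImportRecord],
) -> None:
    """Create a persona that starts with only hand-picked, justified items.

    There is intentionally no "copy everything" option: an empty selection
    simply yields an empty store.
    """
    if target in stores:
        raise ValueError(f"persona {target!r} already exists")
    # Validate everything before touching state, so a bad request changes nothing.
    for item_id, reason in selections.items():
        if item_id not in stores[source]:
            raise KeyError(item_id)
        if not reason.strip():
            raise ValueError(f"item {item_id!r} needs a reason to cross")
    stores[target] = {item_id: stores[source][item_id] for item_id in selections}
    log.extend(ImportRecord(source, target, i, r) for i, r in selections.items())


stores = {
    "personal": {
        "shoe-size": "Wears size 10 trail running shoes",
        "finances": "Notes on the monthly budget",
    }
}
log: list[ImportRecord] = []
create_persona_with_imports(
    stores, "personal", "hobby", {"shoe-size": "needed for race-gear shopping"}, log
)
print(stores["hobby"])  # {'shoe-size': 'Wears size 10 trail running shoes'}
```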

The "search across all my memories" temptation. This will come up. Users will want a feature that searches everything they've ever told the AI. Build it carefully or not at all. If you build it, treat it as an explicit cross-persona operation with the loudest possible UI signal that you're temporarily flattening the wall. Do not make it the default search bar. The friction is the feature.
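A sketch of that friction, assuming a hypothetical `MemoryService`: the everyday `search` is scoped to one persona, while `search_everything` refuses to run without an explicit `user_confirmed=True`, logs every store it touches, and groups results by source so a UI can show where each hit came from.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryService:
    stores: dict[str, list[str]]
    crossing_log: list[str] = field(default_factory=list)

    def search(self, persona: str, query: str) -> list[str]:
        # The default search bar: scoped to the active persona only.
        q = query.lower()
        return [t for t in self.stores.get(persona, []) if q in t.lower()]

    def search_everything(self, query: str, *, user_confirmed: bool) -> dict[str, list[str]]:
        """Deliberately flattens the wall; requires confirmation and logs each store touched."""
        if not user_confirmed:
            raise PermissionError("searching all personas needs explicit user confirmation")
        results: dict[str, list[str]] = {}
        for persona in self.stores:
            self.crossing_log.append(f"global search read {persona!r} for {query!r}")
            hits = self.search(persona, query)
            if hits:
                # Grouped by source persona so the UI can label where each hit came from.
                results[persona] = hits
        return results


svc = MemoryService({"work": ["Launch slipped to May"], "family": ["May birthday party"]})
print(svc.search("work", "may"))  # ['Launch slipped to May']
everything = svc.search_everything("may", user_confirmed=True)
print(everything)  # {'work': ['Launch slipped to May'], 'family': ['May birthday party']}
```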

What I'd ask first

If you're already running an AI assistant with long-term memory for yourself, your team, or your company, the question I'd start with is this: when memory is retrieved during a session, can you point at which persona's store the retrieval came from? If the answer is "all of them" or "I'm not sure," you don't yet have persona-scoped memory. You have one bucket with labels on the items, and the labels won't save you on the day they need to.
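One way to answer that question is to record, for every retrieval, both the persona of the session and the store the item actually came from, then flag any mismatch that wasn't an approved crossing. `RetrievalEvent` and `unexplained_crossings` are hypothetical names for illustration; if you can't populate `source_store` for your own retrievals, that is the "I'm not sure" answer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RetrievalEvent:
    session_persona: str  # the persona the user was working in
    source_store: str     # the store the retrieved item actually came from
    item_id: str
    approved_crossing: bool = False


def unexplained_crossings(events: list[RetrievalEvent]) -> list[RetrievalEvent]:
    """Retrievals that left the session's persona without an approved crossing."""
    return [
        e for e in events
        if e.source_store != e.session_persona and not e.approved_crossing
    ]


events = [
    RetrievalEvent("work", "work", "q3-roadmap"),
    RetrievalEvent("family", "work", "oncall-weekend", approved_crossing=True),
    RetrievalEvent("work", "family", "household-concern"),
]
for e in unexplained_crossings(events):
    # prints: work session read 'household-concern' from family
    print(f"{e.session_persona} session read {e.item_id!r} from {e.source_store}")
```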

The next piece in this series is going to take the same idea further down the stack, into the database itself, where row-level filters on a persona ID make the same shape true at the storage tier. Because the only way persona isolation actually holds is if it's enforced at every layer it touches. A leak at the memory layer is a leak at the memory layer. A leak at the database layer is a leak at every layer. The good news is that the pattern is the same all the way down. The bad news is that you have to actually do it everywhere.