The DeepSeek-R1 reality check, one week later

A week ago, an open-source reasoning model erased about a trillion dollars of market cap. Most of the takes from week one are already wrong. Here's what actually changed.

A week ago today, a Chinese open-source reasoning model erased roughly a trillion dollars of market cap from the US AI infrastructure trade in a single day. By Wednesday the discourse had moved on. By Friday it had moved on twice. Most of what gets written in the first week after a thing like this turns out to be wrong by the second week, which is why this piece waited a beat.

DeepSeek-R1 is not the end of OpenAI. It is not a death blow to NVIDIA. It is not a Sputnik moment for US AI. It is also not nothing. It's the first widely public datapoint showing that the moat narrative, the one where you needed seven figures a month and access to the H100 supply chain to play in this league, was always less of a moat than it sounded.

Worth walking through what actually changed.

What changed

The training-cost narrative cracked publicly

The $5M-ish training-cost figure for R1 has been picked apart all week. Some of that scrutiny is fair: the figure comes from DeepSeek's V3 technical report and covers only the final training run of V3, the base model R1 is built on, so it leaves out prior research, ablation experiments, and the reinforcement-learning stage that turned V3 into R1. Some of it isn't: the people loudest about "actually the real cost is $500M when you count everything" are mostly the ones whose business models depend on training costs being prohibitive.

The honest answer is that R1 cost dramatically less than anyone was claiming a frontier reasoning model needs to cost. Not "shaves 20% off the bill" less. Order-of-magnitude less. And the result is competitive with o1 on the reasoning benchmarks people actually quote, such as AIME, MATH-500, and Codeforces.

The "how much does it cost to train a frontier model" question now has a public datapoint that's harder to dismiss than any prior open-weights release.

Reasoning models stopped being a luxury good

Three months ago, OpenAI's o1 cost $15 per million input tokens and $60 per million output tokens. ChatGPT Pro was $200 a month. The implicit market message was that reasoning models (the ones that "think" before they answer) were going to be a premium tier for the foreseeable future.

DeepSeek-R1's API is $0.55 per million input tokens and $2.19 per million output tokens. Same league of capability. Different planet of pricing.
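A back-of-the-envelope sketch makes the gap concrete. It assumes the list prices quoted above, ignores R1's cheaper cached-input rate, and uses a made-up monthly workload; the `PRICES` table and `monthly_cost` helper are hypothetical illustrations, not any vendor's SDK.

```python
# List prices in USD per million tokens, as quoted in the text.
# R1's input price is the cache-miss rate; cached-input discounts are ignored.
PRICES = {
    "o1": {"input": 15.00, "output": 60.00},
    "deepseek-r1": {"input": 0.55, "output": 2.19},
}


def monthly_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of a workload at list price for the given model."""
    p = PRICES[model]
    return (input_tokens * p["input"] + output_tokens * p["output"]) / 1_000_000


# Hypothetical workload: 50M input and 20M output tokens per month.
# Assumption: the output count already includes the model's reasoning tokens.
IN_TOKENS, OUT_TOKENS = 50_000_000, 20_000_000

costs = {m: monthly_cost(m, IN_TOKENS, OUT_TOKENS) for m in PRICES}
for model, cost in costs.items():
    print(f"{model:12s} ${cost:,.2f}")
print(f"ratio: {costs['o1'] / costs['deepseek-r1']:.1f}x")
```

At this made-up volume the bill comes out to $1,950.00 for o1 versus $71.30 for R1, roughly 27x. Because both the input and the output price are about 27x lower, the ratio barely moves whatever the input/output mix.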

Not every reasoning workload migrates to R1 tomorrow. There are real questions about latency, data residency, the censorship layer in the hosted version, and operational maturity. But if a project was deferred because of o1's pricing, that excuse evaporated this week.

Open weights got a frontier reasoning model

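The full R1 weights are on Hugging Face under the MIT license. You can pull them down and run them, given serious hardware for the full 671B-parameter model or far more modest hardware for the smaller distilled versions DeepSeek released alongside it. That's qualitatively different from "you can run a base model and try to RL-tune it yourself." DeepSeek did the work, published the recipe, and shipped the artifact.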

The open-weights movement has been catching up to closed frontier models for two years: the LLaMA leak in 2023 was the inflection point, Llama 2's commercial license was the legal one, and Llama 3.3 narrowed the gap on conventional capabilities. R1 is the first time the gap has narrowed on reasoning specifically, and reasoning is the capability the closed-frontier vendors have been pricing as their crown jewel.

Stargate made the contrast hard to miss

January 20th: DeepSeek-R1 released. Open weights. MIT license. A widely quoted ~$5M training figure.

January 21st: $500 billion Stargate announcement. Trump, Sam Altman, Masayoshi Son, Larry Ellison on stage. Four years of US AI infrastructure investment.

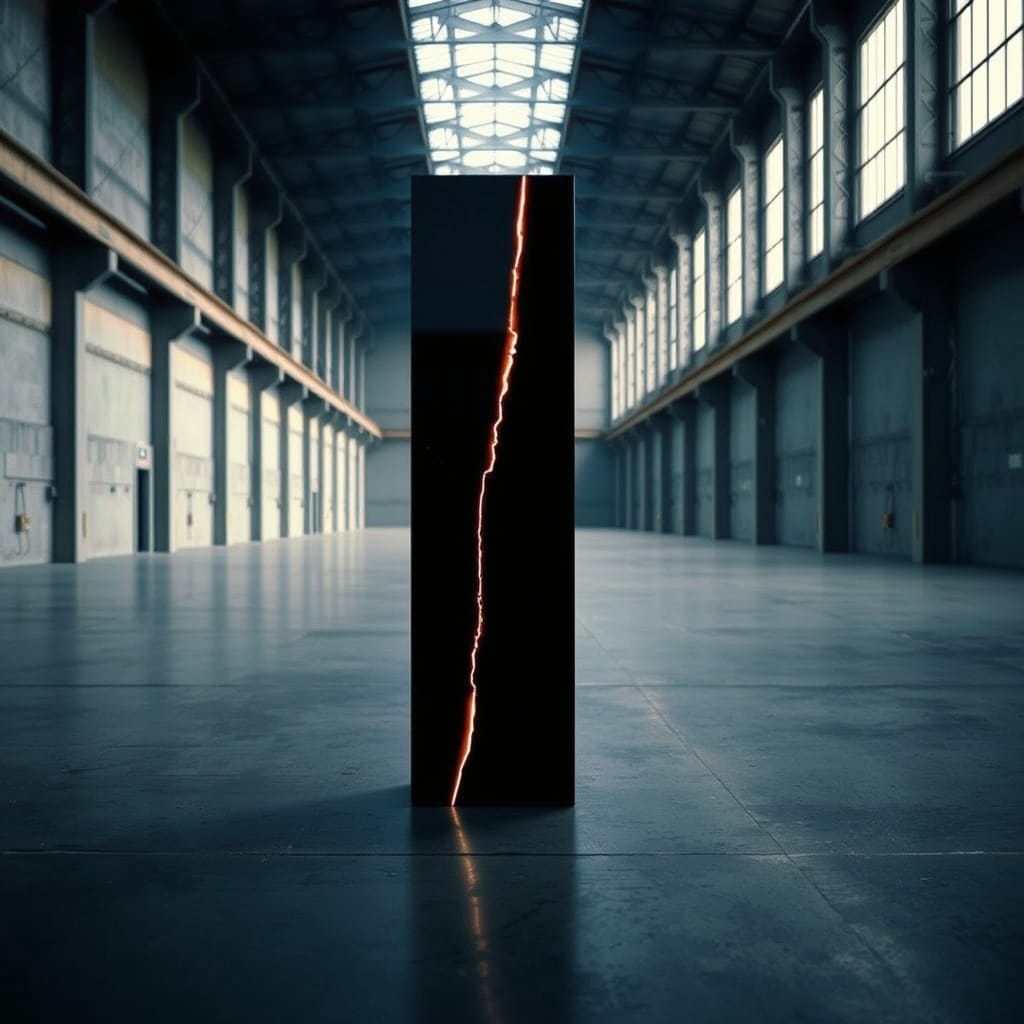

The Stargate number isn't strictly comparable to the R1 number; they answer different questions. But the visual contrast was its own argument. One side announced a result. The other side announced a budget. It was reasonable to watch those two announcements land within a day of each other and come away with at least one private "wait, what?"

What didn't change

NVIDIA isn't dead

The market reaction on Monday (somewhere around $600B off NVIDIA's cap in a day) assumed that "we need less compute to train" maps directly to "we need less NVIDIA." That mapping doesn't hold.

What R1 actually demonstrates is that you can do more with less in training. Inference still scales linearly with usage, and reasoning models spend more compute per answer than conventional ones because they generate long chains of thought before responding. The thing that's growing fastest in this market is people running these models, not people training them. NVIDIA is still selling the picks and shovels for the part of the gold rush that's actually happening.

The stock clawed back part of the loss later in the week, which seems about right.

The closed-frontier shops aren't done

OpenAI, Anthropic, and Google all have models in the pipeline that no R1 benchmark has been run against. They have user surfaces, distribution, enterprise contracts, and product teams that are several years ahead of DeepSeek on shipping. The next round of model releases will be very interesting precisely because closed-shop margins just got compressed.

Expect more openness from the closed shops, not less. Better pricing tiers. Wider context windows at the cheaper tiers. Possibly some reasoning-model price cuts that wouldn't have shipped six months ago.

"AI is a bubble" took a hit but didn't break

The bear case on AI investment has been "the economics don't work, too much capex, not enough revenue." DeepSeek strengthens that case for one specific corner of it (training). It doesn't really speak to whether the applications people are paying for can support the spend on inference, agents, and product development.

That bear case will be settled separately, and it wasn't settled this week.

The interesting question that follows

The question worth asking isn't which model to use. It's how reasoning capability gets composed into actual workflows now that it's effectively a commodity for most use cases.

The pricing has moved. The narrative has moved. The implicit deal has moved: it's no longer "can-you-afford-it," it's "are-you-paying-attention."