Llama 4 lands: open weights at frontier scale

Llama 4 is the first time Meta has shipped a mixture-of-experts open-weights model at frontier scale. The release is more interesting for what it implies about Meta's strategy than for its benchmark wins.

Meta released Llama 4 over the weekend, the first time the company has shipped a mixture-of-experts model at frontier scale under an open-weights license. The headline package is two release-ready models and a teaser for a third: Scout (17B active, 109B total parameters), Maverick (17B active, 400B total), and a preview of Behemoth (~2T parameters), which Meta says is still in training and will land later. The license takes roughly the same posture as Llama 3: usable commercially with some restrictions, including a service-scale clause that blocks the largest cloud-service competitors from offering hosted Llama as a product.

It's worth working through what's actually new versus what's narrative, and what the release implies about where the open-weights frontier sits going into the second quarter.

What's actually new

The architectural shift is the genuinely interesting part. Llama 1, 2, and 3 were all dense transformers: every parameter participated in every forward pass. Llama 4 is mixture-of-experts, which means only a fraction of the total parameters are active for any given token. Scout's 17B active out of 109B total is roughly twice the active-parameter count of Llama 3 8B (and about a quarter of Llama 3 70B's), but with the ability to specialize across many more parameters when the routing wants to.
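To make "only a fraction of the parameters are active per token" concrete, here is a minimal sketch of top-k expert routing in plain Python. Everything in it is illustrative: the expert count, the `top_k` value, the toy experts, and the function names are my assumptions, not Meta's configuration or code.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def route_token(router_logits, top_k):
    """Return {expert_index: weight} for the top_k experts, renormalized to sum to 1."""
    probs = softmax(router_logits)
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    chosen = ranked[:top_k]
    norm = sum(probs[i] for i in chosen)
    return {i: probs[i] / norm for i in chosen}

def moe_forward(x, experts, router_logits, top_k=1):
    """Run only the selected experts on x and mix their outputs by router weight."""
    weights = route_token(router_logits, top_k)
    output = sum(w * experts[i](x) for i, w in weights.items())
    return output, sorted(weights)

# Toy experts (hypothetical): expert i just multiplies its input by i.
toy_experts = [lambda x, scale=i: scale * x for i in range(4)]
out, used = moe_forward(3.0, toy_experts, router_logits=[0.1, 2.0, -1.0, 0.5], top_k=1)
print(used, out)  # [1] 3.0
```

Three of the four toy experts never run for this token, which is the whole point: their parameters exist, but they cost nothing on this forward pass. In a real model the router logits come from a small learned layer applied to the token's hidden state.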

The practical consequence: the inference cost (in FLOPs and in memory bandwidth) scales with the active parameters, not the total. Scout runs at 17B-active speed while drawing on 109B parameters of capacity, which is a meaningfully different proposition from a 109B dense model that would cost roughly six times more compute per token. This is the same architectural bet DeepSeek-V3 was already making (V3 is 671B total / 37B active), and Meta is now publicly committed to the same direction at the open-weights frontier.
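The "six times" figure is just the ratio of total to active parameters. A back-of-the-envelope sketch, assuming the common rule of thumb of about 2 FLOPs per active weight per generated token (it ignores attention and routing overhead, so treat the output as a rough ratio, not a measurement; the function names are mine):

```python
def forward_flops_per_token(active_params):
    """Rule of thumb: about 2 FLOPs (one multiply, one add) per active weight per token."""
    return 2 * active_params

def dense_cost_ratio(total_params, active_params):
    """How much more per-token compute a dense model of the same total size would need."""
    return forward_flops_per_token(total_params) / forward_flops_per_token(active_params)

# (total, active) parameter counts as quoted in the article
models = {
    "Scout": (109e9, 17e9),
    "Maverick": (400e9, 17e9),
    "DeepSeek-V3": (671e9, 37e9),
}

for name, (total, active) in models.items():
    ratio = dense_cost_ratio(total, active)
    print(f"{name}: a dense model of the same size costs about {ratio:.1f}x per token")
```

For Scout this comes out to about 6.4x, which is where "six times" comes from.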

Maverick's 17B-active / 400B-total puts it in the same neighborhood as the largest deployed-frontier models on capability, with substantially better economics than dense models in the same total-parameter range. The benchmark numbers Meta published put Maverick competitive with Claude 3.5 Sonnet and GPT-4o on most multilingual and reasoning tasks, with the usual caveats about benchmark gaming.

Behemoth is the marketing piece, a ~2T-parameter teaser meant to signal "we are training at frontier scale and we'll show you the results when it's ready." Whether Behemoth actually lands on its promised timeline is a separate question that I'd file under "see what ships."

What's strategy

The structural read on Meta's release is that they've decided the way to compete with the closed-frontier shops isn't to match them on capability. It's to turn frontier-class capability into commodity infrastructure that any cloud, any startup, any internal team can deploy. The license still has the carve-out that prevents the largest hyperscalers from offering Llama-as-a-service directly, but it permits essentially everyone else. That's a strategic choice with real teeth: it makes Meta's models the default foundation for the long tail of AI-using companies that don't want to be locked into one of three closed-frontier vendors.

The contrast with DeepSeek-R1's release in January is instructive. DeepSeek released its model under the MIT license: fully permissive, no carve-outs, and anyone, hyperscalers included, can deploy it however they want. Meta's release is more constrained but comes with the institutional weight of a major US AI lab and a longer track record of releases. Different bets, both putting downward pressure on the closed-frontier business model.

What it changes for self-hosting

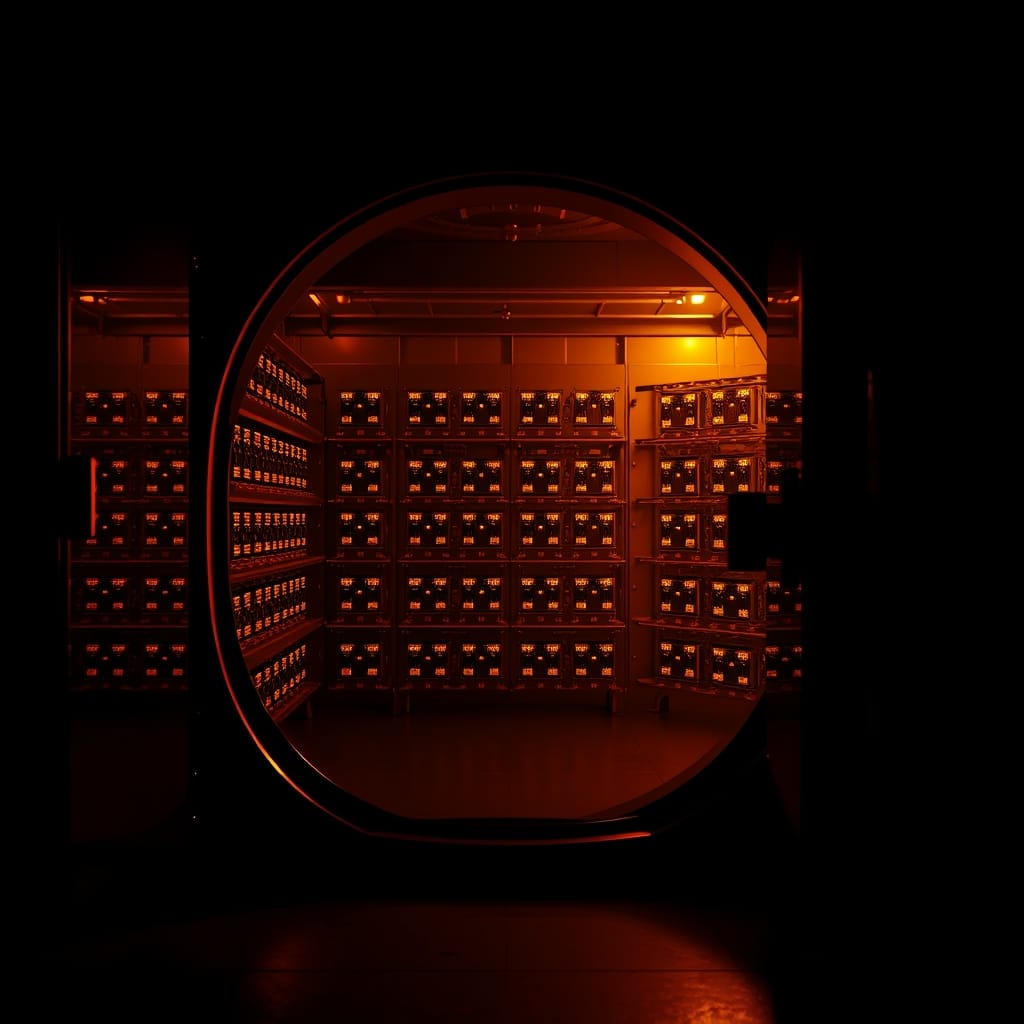

The honest version of what early-2025 self-hosting at home looks like needs an update. Llama 4 Scout's quantized footprint puts it in reach for serious home rigs: at INT4, the model state fits in roughly 60GB, which is workable on a Mac Studio with 64GB+ unified memory or on a two-card NVIDIA workstation. Maverick is harder: at INT4 it's still 200GB+ of model state, and that's well past what consumer hardware comfortably handles.
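The footprint numbers follow from simple arithmetic: total parameters times bits per weight. The sketch below counts raw weights only, which is an assumption on my part. Real quantized files also carry scale metadata and often keep some layers at higher precision, and the runtime needs room for the KV cache, so practical figures land somewhat above these.

```python
def weight_footprint_gb(total_params, bits_per_weight):
    """Raw weight storage in GB (1 GB = 1e9 bytes), ignoring quantization metadata."""
    return total_params * bits_per_weight / 8 / 1e9

# Total parameter counts as quoted in the article
models = {"Scout": 109e9, "Maverick": 400e9}

for name, total in models.items():
    for label, bits in (("FP16", 16), ("INT8", 8), ("INT4", 4)):
        print(f"{name} at {label}: about {weight_footprint_gb(total, bits):.1f} GB of weights")
```

Scout at INT4 works out to 54.5 GB of raw weights, consistent with "roughly 60GB" once overhead is added. Maverick at INT4 is 200 GB before any overhead.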

For most home self-hosters, Scout becomes a practical option. The DeepSeek-R1-Distill 32B was the previous "best model that runs at home for reasoning tasks"; Scout is a real alternative for general-purpose work, with a different shape of strengths. The 70B-class dense models (Llama 3.3 70B in particular) are not obsolete: they still outperform Scout on some specific benchmarks and they're well understood. But Scout opens up an axis of comparison that wasn't there before.

The MoE architecture has its own quirks for self-hosting. The full model state has to live somewhere, even though only a fraction is active per token, which means memory requirements are higher than the active-parameter count suggests. The benefit is throughput: inference is fast because only the active experts are doing work. For interactive use cases the speedup is real and useful.
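One way to see both halves of that trade-off is a rough single-stream decode model: all weights must be resident in memory, but each token only has to read the active weights. The sketch assumes decoding is memory-bandwidth bound and uses a hypothetical 800 GB/s machine; both the bandwidth figure and the function names are placeholders of mine, and the result is an upper bound, not a benchmark.

```python
def resident_weight_bytes(total_params, bits_per_weight):
    """Memory that must hold the full model, whether or not an expert is used."""
    return total_params * bits_per_weight / 8

def decode_ceiling_tokens_per_s(active_params, bits_per_weight, bandwidth_bytes_per_s):
    """Upper bound on tokens/s if each token requires reading every active weight once."""
    bytes_read_per_token = active_params * bits_per_weight / 8
    return bandwidth_bytes_per_s / bytes_read_per_token

ASSUMED_BANDWIDTH = 800e9  # hypothetical memory bandwidth in bytes/s; substitute your own

scout_ceiling = decode_ceiling_tokens_per_s(17e9, 4, ASSUMED_BANDWIDTH)
dense_ceiling = decode_ceiling_tokens_per_s(109e9, 4, ASSUMED_BANDWIDTH)

print(f"Resident weights (INT4): {resident_weight_bytes(109e9, 4) / 1e9:.1f} GB either way")
print(f"Scout-style MoE ceiling: {scout_ceiling:.0f} tokens/s")
print(f"109B dense ceiling:      {dense_ceiling:.0f} tokens/s")
```

The memory line is identical for the MoE and the dense model; only the throughput ceiling differs. Real throughput will be lower than either ceiling, and batching several requests narrows the gap because different tokens pull in different experts.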

What it doesn't change

A few things this release doesn't shift:

- The closed-frontier shops are still ahead on the very hardest reasoning. Llama 4 closes some of the gap on conventional capability benchmarks; it does not appear to outperform o1 or Claude 3.7 with extended thinking on the hardest math/proof-style problems. The open-weights catch-up has been narrowing the gap for a year, but the gap at the top end is still real.

- Long-context behavior is mixed. Meta is claiming up to 10M-token context for Scout (with caveats about effective use), but the practical experience of running long-context inference at home is shaped at least as much by hardware memory as by what the model can in principle handle. The marketing context number and the useful context number are different.

- The license is not as permissive as MIT. For projects where the license matters, DeepSeek-R1 and other MIT-licensed open models are still in a different category. Meta's license is workable for most uses but reading it before committing remains a real step.

- Tooling lag is real. In the first 48 hours after a major model release, the inference runtimes are still catching up. Quantization quality varies wildly during the early weeks. If your workload depends on Llama 4 working well, give the tooling a few weeks before judging the model.
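On the long-context point in the list above, the hardware limit is easy to estimate: a plain full-attention KV cache grows linearly with context length. The layer count, KV-head count, and head dimension below are hypothetical placeholder values, not Scout's published configuration, and architectures that use local or chunked attention in some layers would need less than this.

```python
def kv_cache_bytes(context_tokens, n_layers, n_kv_heads, head_dim, bytes_per_value=2):
    """Bytes for keys plus values across all layers, for one sequence."""
    per_token = 2 * n_layers * n_kv_heads * head_dim * bytes_per_value  # 2 = K and V
    return context_tokens * per_token

# Hypothetical model shape, for illustration only
LAYERS, KV_HEADS, HEAD_DIM = 48, 8, 128

for tokens in (8_192, 131_072, 10_000_000):
    gib = kv_cache_bytes(tokens, LAYERS, KV_HEADS, HEAD_DIM) / 2**30
    print(f"{tokens:>10,} tokens: about {gib:,.1f} GiB of FP16 KV cache")
```

Under these assumed dimensions, a 128K-token context already needs 24 GiB of cache on top of the weights, and a 10M-token context is far beyond any home machine. That is the gap between the marketing context number and the useful one.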

The picture going into Q2

Reading the release alongside the LLaMA leak that started this thread two years ago and Llama 2 making the licensing real in 2023, the trajectory is clearer in retrospect than it was at any of the intermediate steps. The pattern is: Meta releases a model that's slightly behind the frontier, the open-source community picks it up and makes it work, the closed-frontier shops respond by pricing or shipping faster, and the floor of "capability you can run for free" rises by another notch.

Llama 4 is the next notch. It's not the model that ends the closed-frontier business; that's not how this has gone, and probably not how it will go. It's another floor-raise, and the cumulative effect of those floor-raises over the past two years is what makes the current open-weights option real.

The next datapoint is what the closed shops do in response. The pricing pressure that DeepSeek introduced in January is now compounded by Meta entering the same architecture class. The next round of frontier model releases will tell us whether the closed shops adjust pricing or feature mix or both.