GPT-4.5 vs Claude 3.7 vs Gemini 2.0: a cloud architect's take

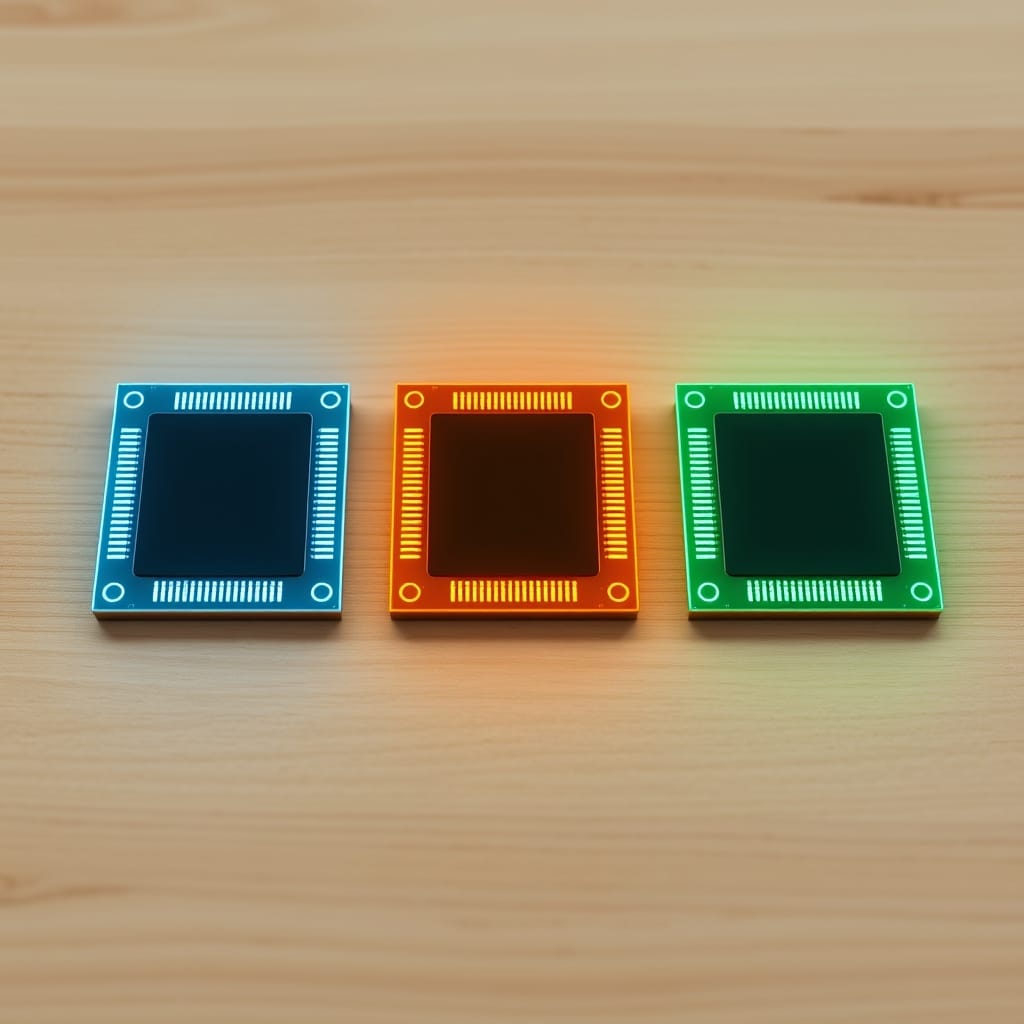

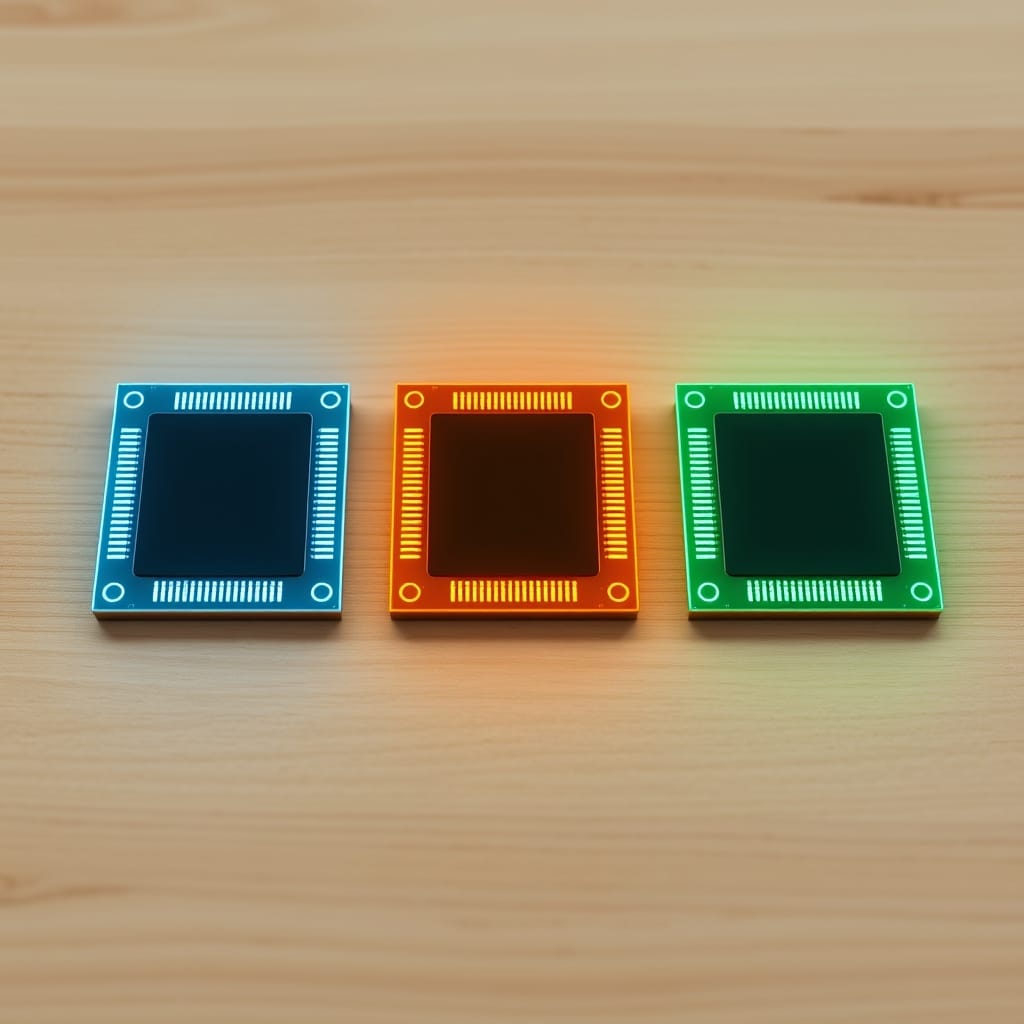

Three frontier models on the table at the end of February, three different bets about what "frontier" means. The interesting comparison isn't who wins, it's who fits which workload.

Reasoning tokens have to live somewhere. The somewhere is your context window. Worth working through what the budget actually looks like once you stop pretending output is the only thing that costs.

Anthropic shipped a model that knows when to think. The interesting question isn't the benchmark numbers, it's what happens when reasoning becomes a default behavior instead of a separate product tier.

An idea sketched two years ago assumed expertise-as-licensable-artifact would be a premium-tier product. The economic floor just dropped through that assumption. Worth re-examining what the idea was actually about now that the substrate has changed.

The open-weights frontier is genuinely usable from a home rig now. The bottleneck has moved: it isn't capability anymore, it's memory bandwidth and patience.

The DeepSeek-R1 number is small. The market reaction wasn't. The interesting bit isn't the dollar figure, it's which assumption it broke.

A week ago, an open-source reasoning model erased about a trillion dollars of market cap. Most of the takes from week one are already wrong. Here's what actually changed.

Meta released Llama 2 last week with a permissive commercial license. Five months after the leak made local inference possible, the legal layer just caught up to the technical one.

Anthropic shipped Claude 2 yesterday with a 100K-token context window. The capability is genuinely interesting; the architectural shift it forces is the bigger story.

ChatGPT plugins shipped a month ago. Function calling shipped last week. The substrate for agents that take action on your behalf just landed, and the second-order problems are going to be ugly.

If the goal is a working representation of how a specific person reasons in their domain, what's the actual stack? Walking through corpus, model, retrieval, eval, and where each piece breaks today.

The model providers scraped the open internet and called it fair game. The next phase needs an actual market for the data, and the structural pieces of that market don't exist yet.